west

[ˈwɛst]

1

west

noun

- the direction where the sun sets : the direction that is the opposite of east

- regions or countries west of a certain point, such as:

  - the western part of the U.S.

2

west

adjective

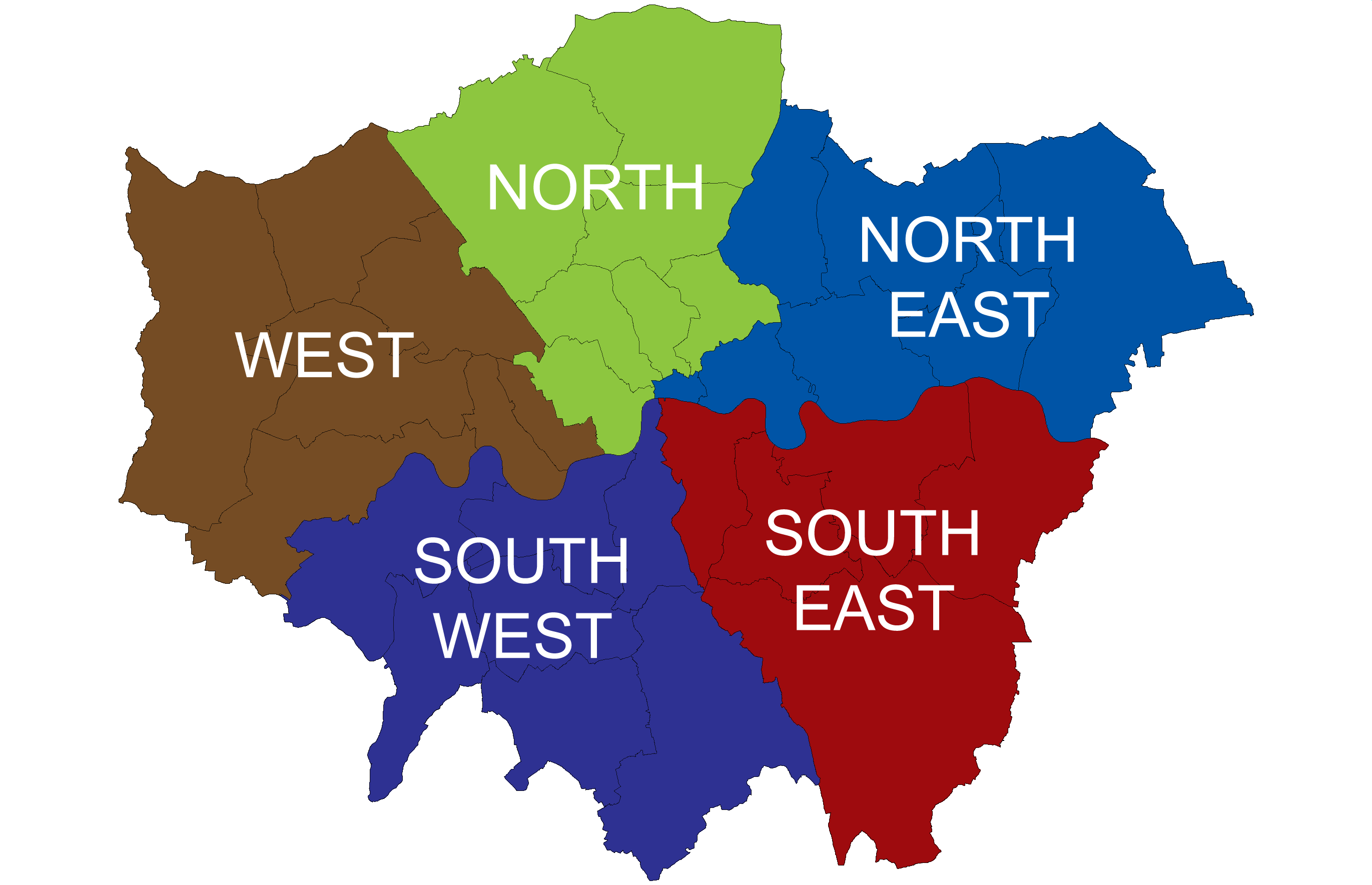

- located in or toward the west

- coming from the west

3

west

adverb